Gemini Omni Review: Google’s New AI Video Model Explained

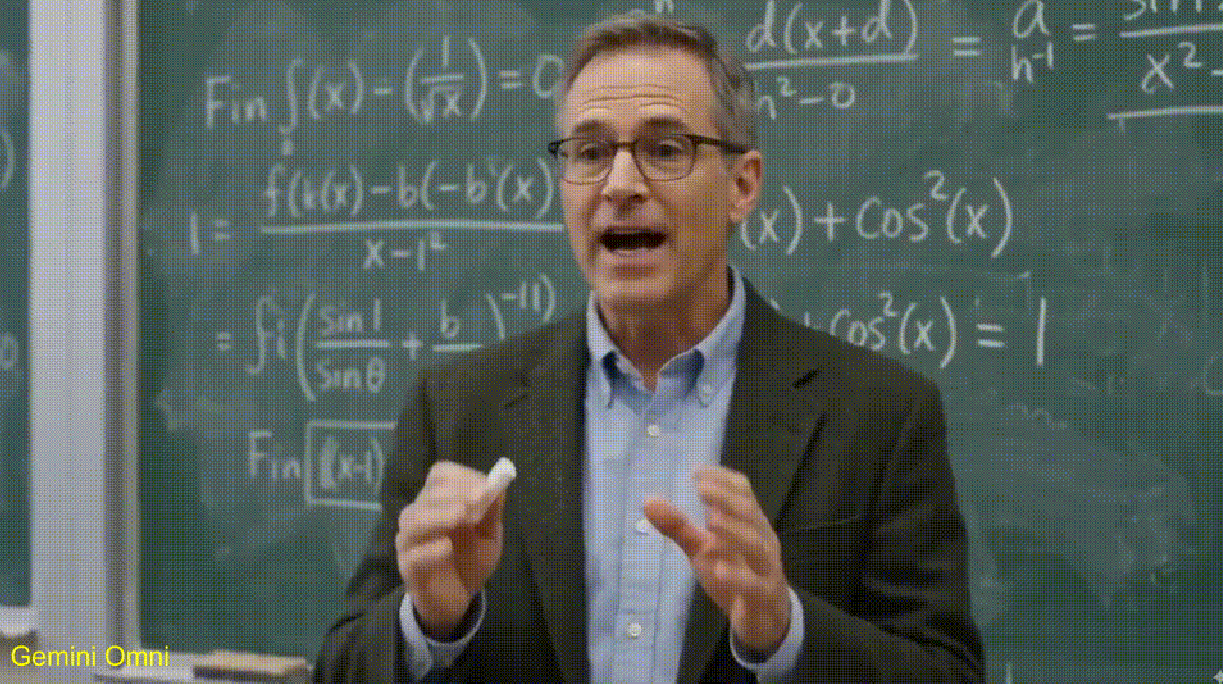

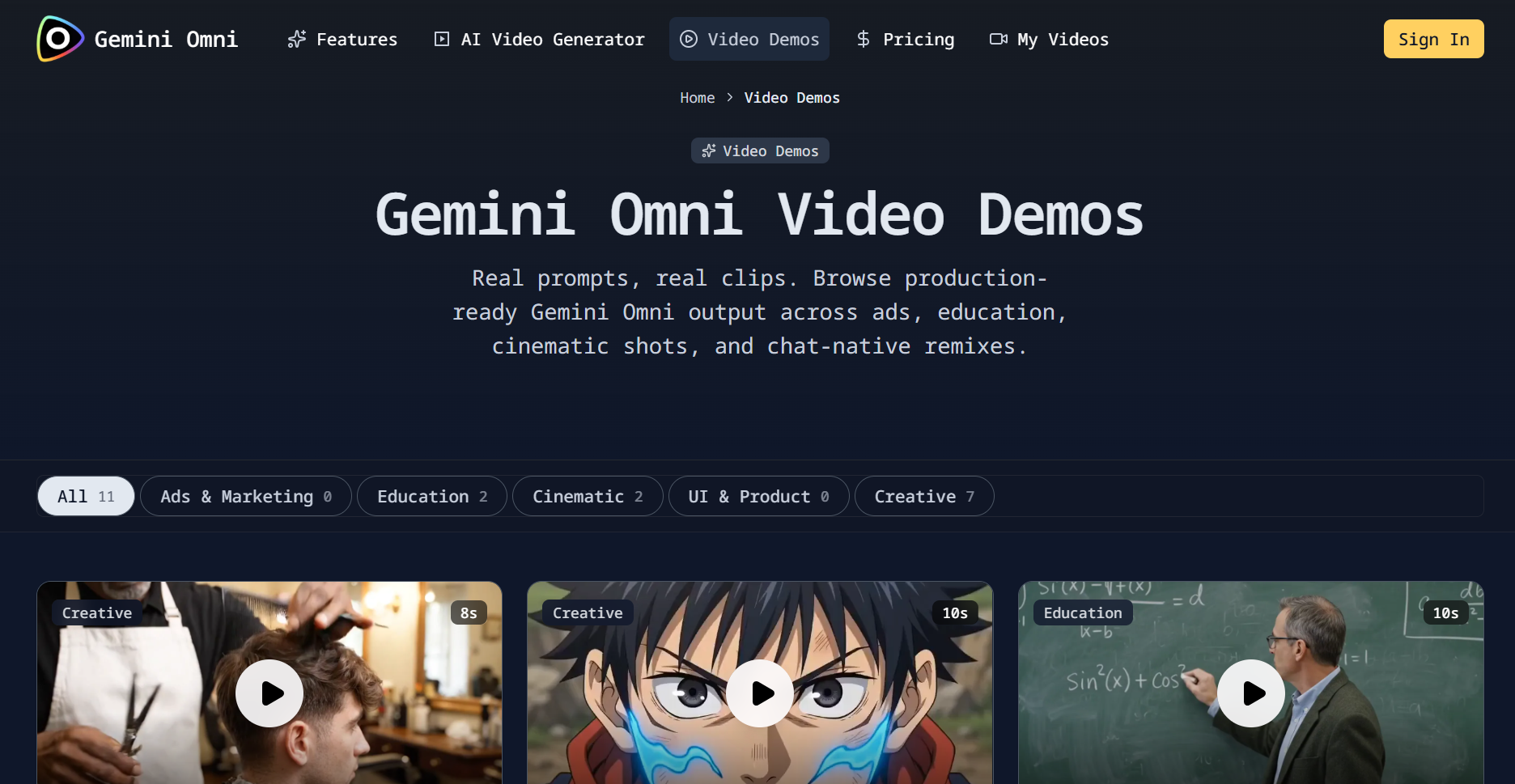

If you have been anywhere near AI video Twitter (X) or creator forums in mid-May 2026, you have seen the same two clips circulate: a professor writing trigonometry on a chalkboard, and a cinematic seaside dinner with two friends sharing spaghetti.

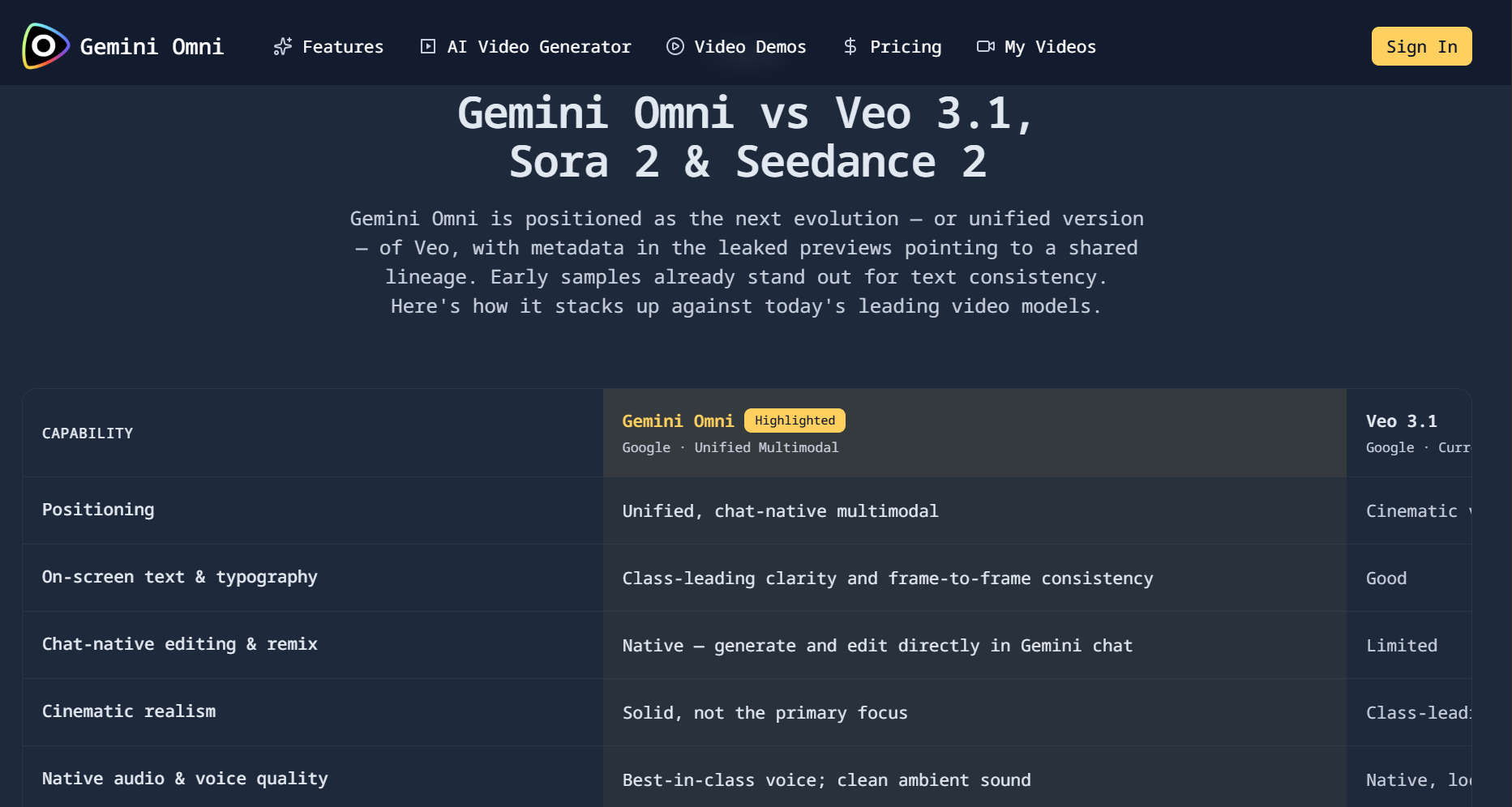

Commentators disagree on whether the footage represents a true leap beyond today’s best models, but they agree on the headline: Google’s consumer AI stack is signaling a new video chapter, often labeled “Omni” in metadata and in-app copy, even though Google has not shipped a formal product brief with that name. Until that brief lands, treat “Omni” as a moving target: a headline about a “Gemini Omni video model” may describe packaging, routing, or a genuinely new capability stack.

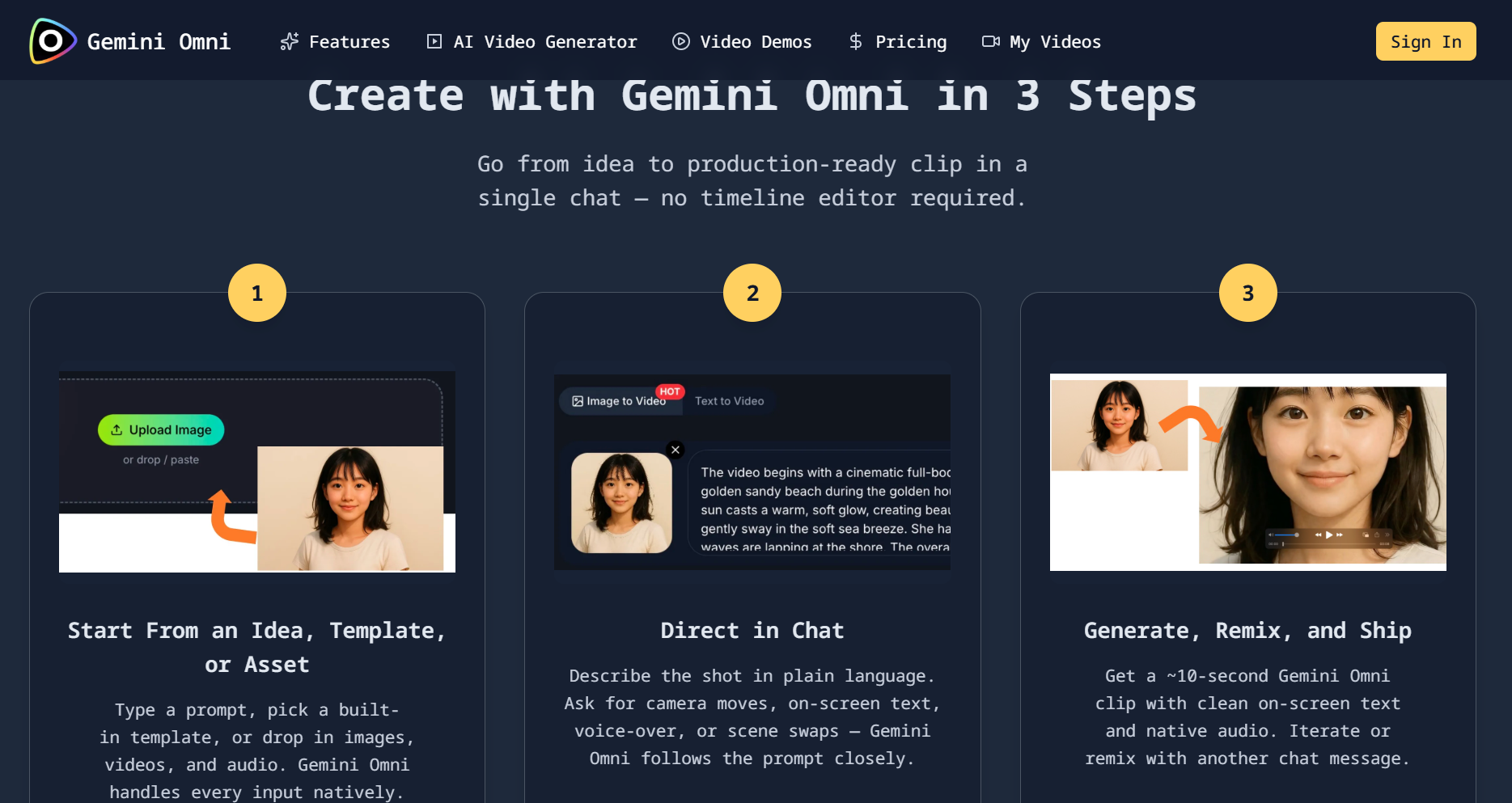

This article is a trend and narrative analysis: what the evidence suggests about where AI video is going, why incumbents are racing toward edit-in-chat and remix workflows, and what a prudent creator or product team should assume before Google I/O 2026. Practically, that means asking whether your stack can already support a Gemini Omni-style generation loop (prompt, preview, revise) without waiting for a keynote to validate the workflow.

Why “Omni” is trending now and why naming matters

Three forces collide in this story.

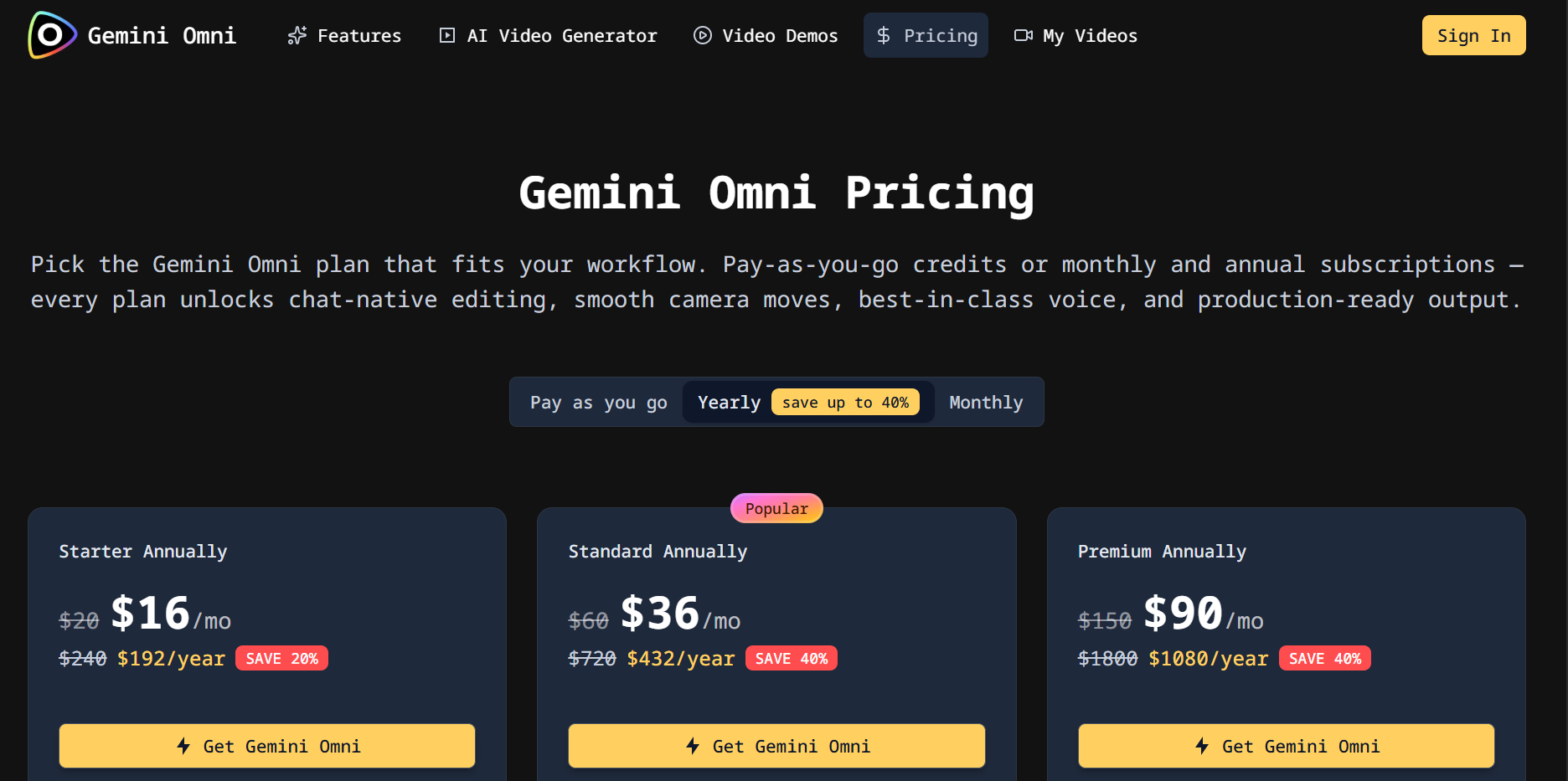

First, video is the most expensive modality to serve at quality, which means every “new model” rumor is also a rumor about pricing, caps, and enterprise packaging. Early tester anecdotes already point to aggressive consumption of daily quotas on paid tiers when running short generative clips, which matches what outlets like Android Authority summarized from community reports.

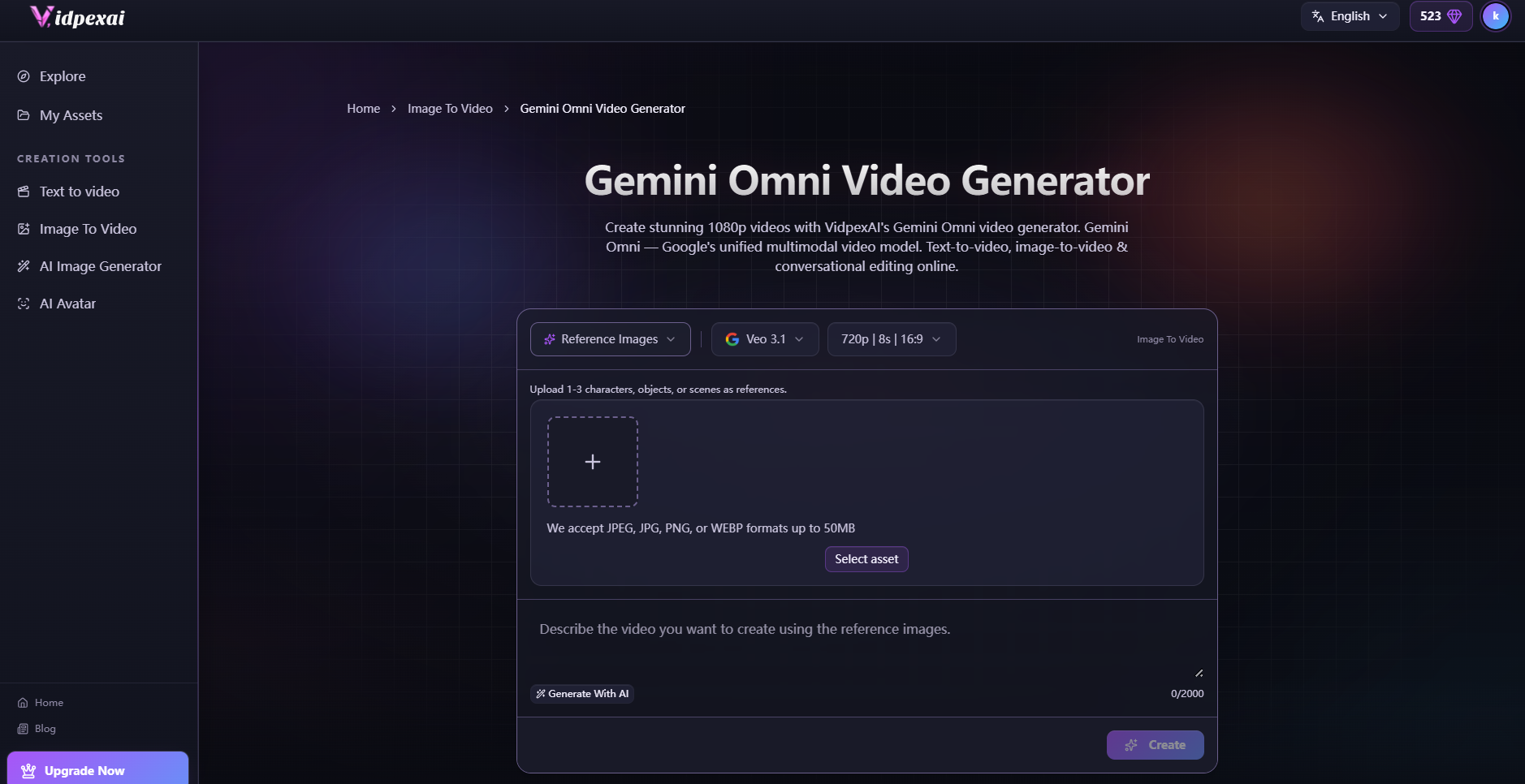

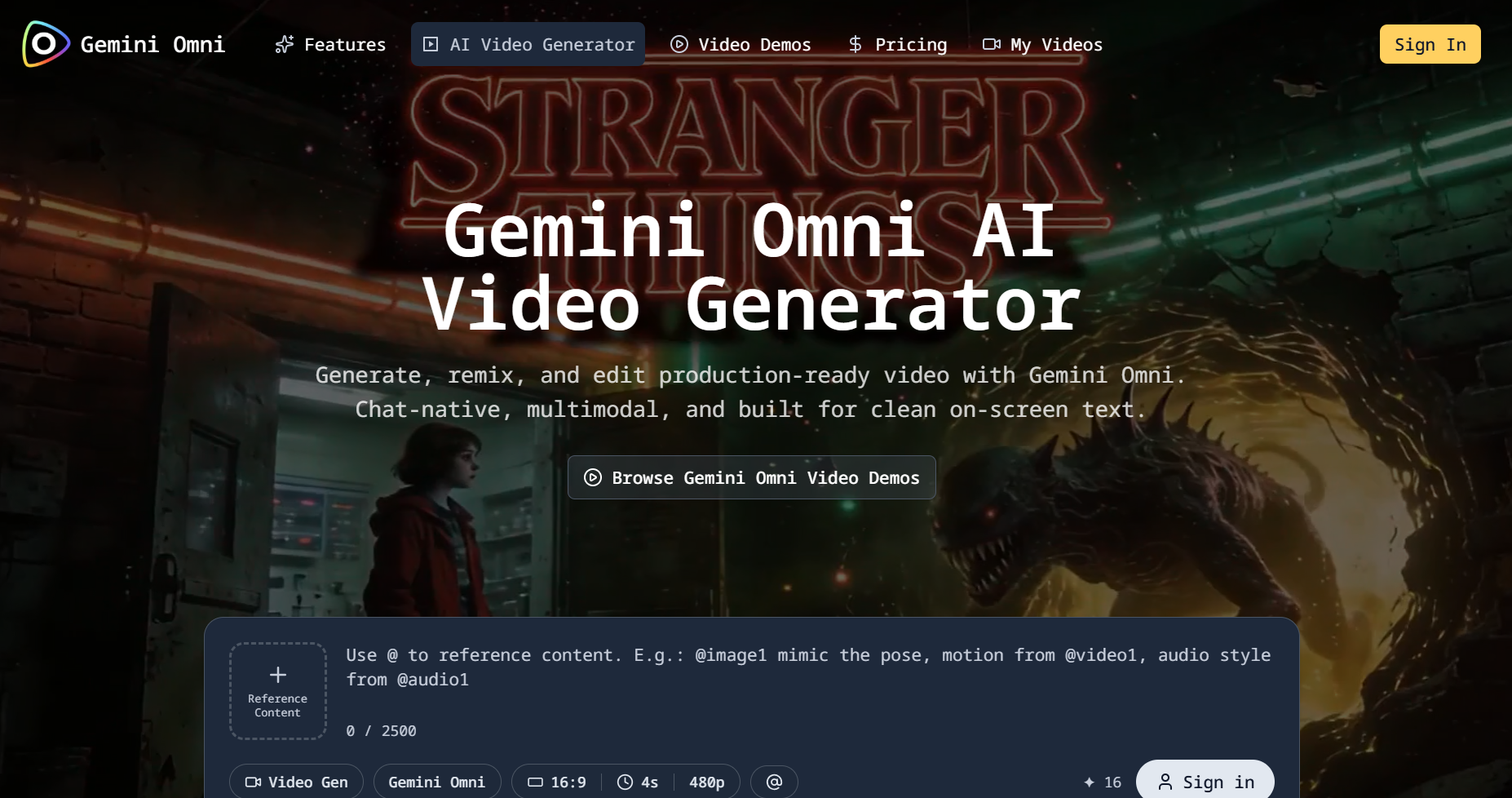

Second, Google’s Gemini app is becoming the default front door for mainstream users who do not think in terms of “Veo” or “API routes.” If Google introduces a new consumer label, it is less about laboratory taxonomy and more about a narrative users can repeat: one assistant, many outputs. If you want to stress-test the same “one assistant, many outputs” loop today (text or image in, short video out), you can run it end-to-end in the browser with the Gemini Omni video generator on VidpexAI, which supports multi-reference uploads, fast iteration, and download once you are happy with the cut.

Third, creator culture now evaluates models through meme-grade stress tests (spaghetti scenes, chalkboard math, handshake micro-gestures) because those tests expose failure modes that marketing sizzle reels avoid. That is exactly the analytical frame used in independent video commentary on the leaked clips. For short-form teams, the real question is whether a Gemini Omni video maker workflow can survive those meme tests in production, not just in a launch montage.

What public evidence actually shows

Wave 1: In-product copy as a staging signal

Reporting from TestingCatalog and others highlighted user-visible language in Gemini’s video area suggesting templates and an “Omni”-labeled pipeline adjacent to existing Veo-backed flows. In mature product organizations, copy changes in live surfaces often precede pricing and policy changes. That does not guarantee a launch date, but it is a stronger signal than a random repo commit.

Wave 2: Demos, metadata tags, and community forensics

Outlets documented the “Create with Gemini Omni” style prompts and shared early outputs, including the chalkboard mathematics scenario. Treat circulating Gemini Omni video demos as signals of what the market wants to believe, then validate the same scenarios on your own prompts, seeds, and upload constraints.

Separately, creators on YouTube walked frame-by-frame through what impressed them (handwriting fidelity) versus what still looked synthetic (facial micro-animation, object permanence during eating scenes), which is valuable because it reframes the story from hype to reproducibility. In plain product language, those leaks read like early positioning for a Gemini Omni AI video generator experience: fast clips, meme-grade stress tests, and immediate social distribution. The frame-by-frame breakdowns are useful precisely because they turn viral Gemini Omni video moments into a checklist of failure modes you can score on your own briefs.

Important methodological note: until Google publishes reproducible access, latency distributions, and guardrail documentation, all public comparisons are anecdotal. That caveat applies even if the Gemini Omni model is real and strong: without reproducible access, “better” is mostly a vibes metric. The comparisons are still useful for trend forecasting because they show which dimensions the market will use to judge “S-tier” video in late 2026: text stability in frame, multi-agent blocking in scenes with utensils and food, lip sync and dialogue clarity, and camera grammar across cuts.

Three plausible interpretations of “Omni”

Scenario A: Consumer rebrand and packaging around Veo-class engines

If “Omni” is primarily positioning, the competitive landscape does not change overnight; pricing and distribution do. In that world, “gemini omni 1”-style labels may simply mark a first-wave routing string—not a guarantee of a new physics engine under the hood. Incumbent platforms still win on workflow depth (templates, timelines, brand kits, batch generation).

Scenario B: A Gemini-native video stack parallel to Veo

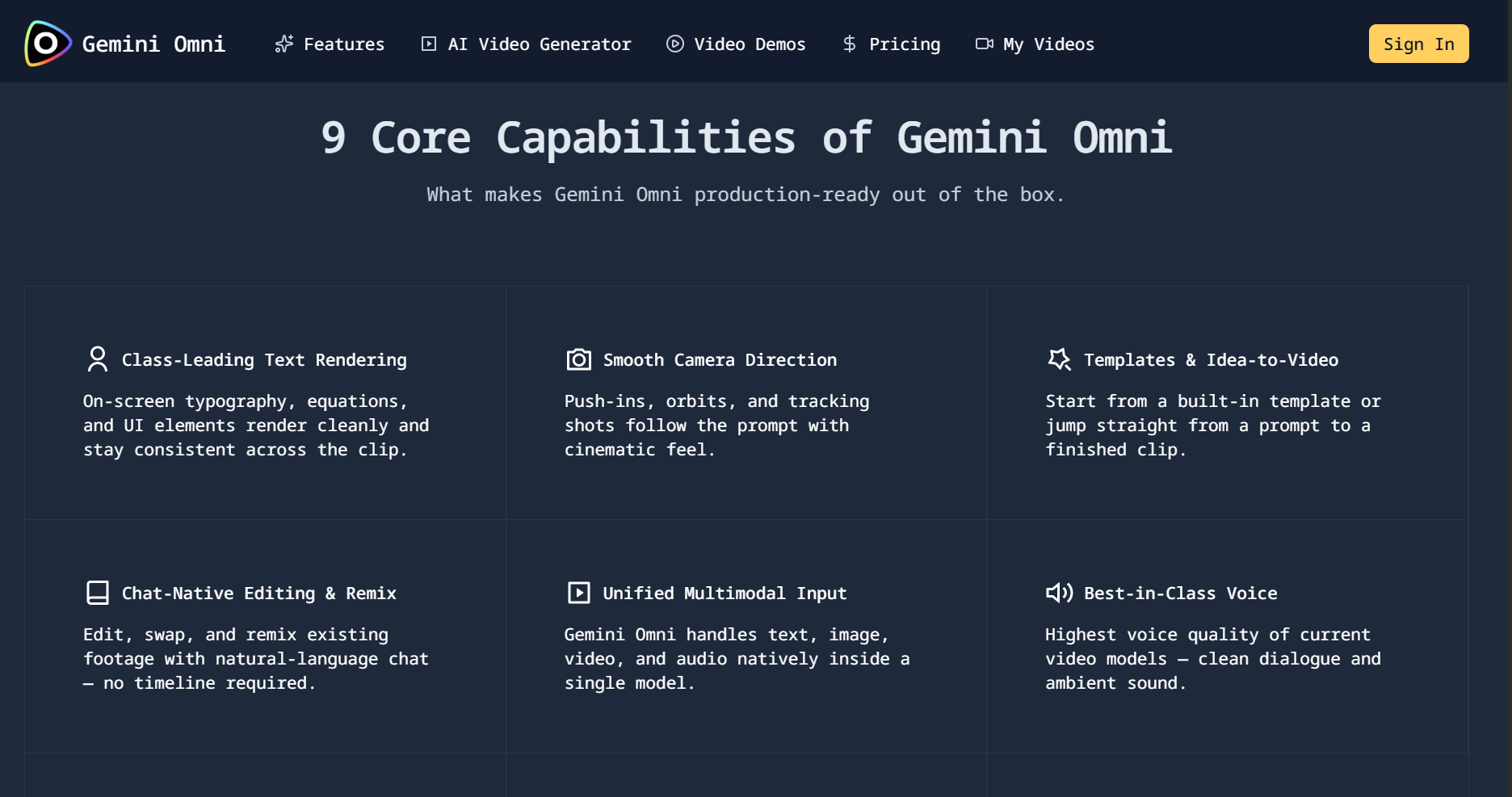

If Omni is a distinct track optimized for assistant-native editing, the trend is conversational iteration: users treat video like a document that can be revised by chat. That would pressure standalone editors to expose similar natural-language operation layers, not just better pixels. If that assistant-native path wins, many teams will stop comparing timelines and start comparing the quality of a Gemini Omni video editor layer: how reliably chat turns into a usable cut.

Scenario C: A genuine “omni” modality unification

If the name is not marketing fluff and Google moves toward one model class that spans text, images, audio, and video with tighter coupling, then third-party creative suites must decide whether they compete on model diversity (best-of-breed routing) or vertical integration (single vendor simplicity). Most of the market will likely choose hybrid routing: one UX, many backends.

What the viral demos imply for 2026 product strategy

Regardless of which scenario is true, the demos and commentary outline four durable trends for the next 12–18 months.

1) From “one-shot clip” to “session-based creation”

If remixing and chat edits land in mainstream Gemini, the winning products will optimize for short feedback loops: regenerate a segment, not the entire timeline. That shift elevates Gemini Omni video creation from a novelty feature into an operational requirement: shorter cycles beat prettier one-shots when you are shipping weekly.

2) Text-in-video becomes a first-class evaluation metric

Education, finance, healthcare marketing, and technical influencers all need legible numerals and symbols. The chalkboard clip went viral because it touches a real commercial pain point: explaining concepts on camera without a studio.

3) Audio and dialogue raise the ceiling faster than resolution

Creators now judge outputs on mouth shape, plosives, and room tone, not only on pixels. That pushes vendors to bundle dialogue models, music, and SFX into unified packages.

4) Governance becomes a product feature

Remixing user-supplied media drags platforms into IP, likeness, and provenance territory. Expect more visible disclosures, watermarking debates, and enterprise “safe modes” bundled into pricing tiers.

What prudent teams should do this month

If you run a content org, a marketplace, or a creative SaaS product, treat Omni as a schedule risk and a UX research signal, not as a guaranteed dependency.

- Run the same creative brief across two or three stacks and score outputs on dimensions you actually ship (SKU readability, human skin stability, hands interacting with objects, spoken line intelligibility).

- Instrument your own usage economics now, the way Gemini users are suddenly having to track quota burn: video is a credit furnace.

- Design for model swappability so you are not locked into a single vendor narrative the week before a conference keynote.
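
The swappability and metering points in the list above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not any vendor's API: `VideoBackend`, `StubBackend`, `MeteredRouter`, and the credit rates are all hypothetical names and numbers, and the stub returns a fake clip ID instead of calling a real service.

```python
from dataclasses import dataclass, field
from typing import Protocol


class VideoBackend(Protocol):
    """Hypothetical interface; real vendor SDKs will look different."""

    name: str
    credits_per_second: float

    def generate(self, prompt: str, seconds: int) -> str: ...


@dataclass
class StubBackend:
    """Stand-in backend that fabricates a clip ID instead of calling a vendor."""

    name: str
    credits_per_second: float  # assumed rate, for illustration only

    def generate(self, prompt: str, seconds: int) -> str:
        return f"{self.name}-clip-{len(prompt)}-{seconds}s"


@dataclass
class MeteredRouter:
    """Routes requests to a named backend and tracks credit spend against a cap."""

    backends: dict[str, VideoBackend]
    daily_credit_cap: float
    spent: float = 0.0
    log: list[tuple[str, int, float]] = field(default_factory=list)

    def generate(self, backend_name: str, prompt: str, seconds: int) -> str:
        backend = self.backends[backend_name]
        cost = backend.credits_per_second * seconds
        # Refuse the request before spending if it would exceed the cap.
        if self.spent + cost > self.daily_credit_cap:
            raise RuntimeError("daily credit cap would be exceeded")
        clip_id = backend.generate(prompt, seconds)
        self.spent += cost
        self.log.append((backend_name, seconds, cost))
        return clip_id


router = MeteredRouter(
    backends={
        "stack_a": StubBackend("stack_a", credits_per_second=2.0),
        "stack_b": StubBackend("stack_b", credits_per_second=5.0),
    },
    daily_credit_cap=100.0,
)
router.generate("stack_a", "professor writing trigonometry on a chalkboard", 10)
router.generate("stack_b", "two friends sharing spaghetti at a seaside dinner", 10)
print(router.spent, router.log)
```

Because callers only see the router, swapping or adding a backend is a change to the `backends` mapping rather than to the calling code, and the log gives a per-request record of where credits went.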

A light note on all-in-one creative platforms

The long-run user need is not “the biggest model,” but predictable production: fast iteration, sensible defaults, and access to multiple engines as each vendor spikes on different prompt classes.

That is the problem space VidpexAI targets as an integrated workspace for AI video, image, and digital-human workflows—text or image in, short-form visuals out—aimed at teams who want cinematic results without traditional editing overhead. Start here: Gemini Omni video generator.

If you are evaluating vendors, compare routing flexibility, credits, and iteration UX, not only headline demos.

Google I/O 2026: a practical watchlist

The fastest way for the market to get clarity is simple: Google Gemini Omni needs explicit definitions—consumer name, model family, developer surface—rather than leaked strings alone. When the keynote narrative unfolds, these are the questions that turn rumor into strategy:

- Is “Omni” a named consumer tier, a model family, or both?

- Does Google publish duration limits, resolutions, and regional availability in the same breath?

- Is upload-and-remix available broadly, or gated?

- What are API paths, pricing, and rate limits for developers?

- How does Google position Omni against ByteDance Seedance, OpenAI, and open-weights ecosystems—on quality, price, or integration?

FAQ

Is the Gemini Omni video model the same as Veo, or a separate track?

Public chatter mixes both. Until Google publishes a capability matrix, treat “Gemini Omni video model” as a positioning and routing label that may sit alongside—or wrap—Veo-class engines, especially inside the Gemini app experience.

What should I evaluate first in a Gemini Omni AI video generator workflow?

Prioritize what you ship: text-in-frame stability, hands/objects (food, utensils), lip sync and dialogue clarity, camera grammar across cuts, and quota burn per 10s clip—these are the dimensions the 2026 creator benchmarks keep surfacing.
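
One way to make that evaluation repeatable is a small scorecard. The sketch below assumes reviewers rate each clip from 1 to 5 on the dimensions named above. The weights, function names, and sample numbers are illustrative assumptions, not a published benchmark.

```python
# Hypothetical weights; tune them to what your team actually ships.
WEIGHTS = {
    "text_in_frame": 0.30,
    "hands_and_objects": 0.25,
    "lip_sync_and_dialogue": 0.25,
    "camera_grammar": 0.20,
}


def weighted_score(scores: dict[str, float], weights: dict[str, float] = WEIGHTS) -> float:
    """Weighted mean of 1-5 reviewer scores across the rubric dimensions."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    for name in weights:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"score for {name} must be between 1 and 5")
    total_weight = sum(weights.values())
    return sum(weights[name] * scores[name] for name in weights) / total_weight


def credits_per_10s(credits_spent: float, seconds_generated: float) -> float:
    """Normalize quota burn to a 10-second clip so stacks are comparable."""
    if seconds_generated <= 0:
        raise ValueError("seconds_generated must be positive")
    return credits_spent / seconds_generated * 10


# Illustrative reviewer scores for one clip, not measured results.
clip_scores = {
    "text_in_frame": 4,
    "hands_and_objects": 3,
    "lip_sync_and_dialogue": 4,
    "camera_grammar": 5,
}
print(round(weighted_score(clip_scores), 2), credits_per_10s(45, 30))
```

Keeping quality and quota burn as separate numbers avoids hiding an expensive stack behind a good-looking average.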

Does a Gemini Omni video editor workflow replace timelines entirely?

Not for every team. The durable trend is session-based iteration: regenerate a segment, branch variants, and remix with chat-style prompts—then export to a traditional editor only if compliance or finishing demands it.

What production scenarios fit Gemini Omni video creation best today?

Short explainers, on-screen typography, product showcases, and rapid A/B social variants—cases where speed beats perfect micro-expression, provided you validate legibility and brand safety on your own content.

Who benefits most from a Gemini Omni video maker style pipeline?

Marketing and short-form teams that need tight feedback loops and repeatable briefs, plus educators or technical creators where equations, labels, and numerals must remain readable.

How should I interpret leaked or viral Gemini Omni video demos?

Treat them as stress tests, not benchmarks: they reveal which failure modes the market cares about, but they are not substitutes for reproducible latency, guardrails, and regional availability from official docs.

Will there be tiers like gemini omni 1 / gemini omni pro for quotas and quality?

Pricing stories in 2026 usually bundle resolution, duration, remix/upload rights, and enterprise “safe modes.” Assume tiered caps until Google confirms naming; instrument your own credits the same way you would for any video backend.

Is “Google Gemini Omni” safe to build into product copy before I/O?

Use language that matches what your UI and API routes actually expose, disclose preview risk, and avoid implying a canonical Google product name unless documentation matches—especially for domains, endpoints, and compliance.

Can I use outputs from a third-party Gemini Omni video generator for ads?

Only under that vendor’s terms plus your territory’s marketing rules. For commercial use, verify likeness, IP on uploads, music rights, and disclosure requirements before scaling spend.

Ethan Brooks

Ethan Brooks leads AI video and AI avatar workflows. With 12+ years specializing in generative AI, Ethan has produced AI-driven campaigns for global brands and tested every major model. He writes about prompts and the future of visual storytelling.